The Google Drive app starts and a moment later your files appear. It's magic. But have you ever wondered what's your files actually? How do these services actually know, which files you are allowed to see?

Are you part of an Organization and you are allowed to view all their files? Have you been assigned to a Team, that's allowed to view or edit files? Has someone shared their files with you as a User?

So in 2019 Google has lifted the curtain and has published a paper on Google Zanzibar, which is Google's central solution for providing authorization among its many services:

The keyword here is Relationship-based Access Control, which is ...

[...] an authorization paradigm where a subject's permission to access a resource is defined by the presence of relationships between those subjects and resources.

I have previously written an article about the Google Zanzibar Data Model, and also wrote some pretty nice SQL statements to make sense of the it. This article will make use of the ideas and queries, and takes a look at implementing Relationship-based Access Control using Microsoft SQL Server and ASP.NET Core.

All code in this article can be found in a repository at:

Table of contents

- Table of contents

- Role-based and Relationship-based ACL

- What we are going to build

- Database Design

- ASP.NET Core Backend with Relationship-based Access Control

- Running an Example with the Sample Data

- Conclusion

Role-based and Relationship-based ACL

We are going to build a tiny part of a Task Management system.

Tasks are basically everywhere in an organization, such as having tasks for signing documents, calling back customers or reminders to write invoices. They are a good example use case for authorization.

The situation for such a Task Management system is somewhat similar to the Google Drive example. You obviously don't want an entire organization to view, edit, delete or close all tasks. Given a sufficiently large headcount in a company, unauthorized access would quickly escalate into a chaos.

Role-based Access Control (RBAC)

One way to authorize a user is by using Role-based Access Control (RBAC).

Role-based Access Control is definitely among the most popular models for defining permissions and authorizing access to an organizations resources, such as our tasks. Highly simplified, a user is being assigned to a set of roles, where each role represents the users role within the organization.

In our Task Management system, a regular user might be able to view tasks, while it requires elevated rights to actually delete a task. Likewise a user being assigned to the role Software Development should probably not be permitted to update tasks created by the Human Resources people.

That said, there was recently a great Microsoft DevBlogs article by Stewart Adam, that discusses designing Role-based Access Control for applications and it's a great read. It discusses quite a similar use case and comes up with some solutions:

As you can see in the article, a Role-based Access Control can get very complex, very quickly. "Subtree grants", "Entity Graph Scopes", "Nested Roles", "Permission Wildcards", ... it sounds great in theory, but sadly none of it is illustrated with actual code, and more importantly none of this exists in ASP.NET Core.

In my experience Role-based Access Control can take you very, very far. And it works great, as long as an organization strictly adheres to the roles defined. But as soon as you need a more fine-grained control, you are out of luck with Roles.

Many, many projects taught me, that there is always a special snowflake, that doesn't fit the defined roles and needs a special treatment. This may lead to an explosion in roles, if you don't have compensation strategies as mentioned in "Authoring an RBAC API for your application (by Stewart Adam)".

Relationship-based Access Control (ReBAC)

One way for having fine-grained Access Control is to employ something similar to Google Zanzibar. Google Zanzibar was first described by Google in a 2019 paper called Zanzibar: Google’s Consistent, Global Authorization System and the paper is available for download at:

It describes the Google's motivation for building a unified authorization system and describes the data model, language and its API. I think there are many excellent sources, that explain Google Zanzibar in detail and much better, than I could:

- Exploring Google Zanzibar: A Demonstration of Its Basics (by Ege Aytin)

- Building Zanzibar from Scratch (by Sam Scott)

- Zanzibar-style ACLs with Prolog (by Radek Gruchalski)

Highly, highly simplified, Google Zanzibar models relationships between Objects and Subjects using the following notation:

<object>#<relation>@<subject>#<subject_relation>

Say we have our system to manage tasks, then we could make up relations like this with the Google Zanzibar syntax:

task323#owner@philipp

task323#viewer@org1#member

task152#viewer@org1#member

task152#viewer@org2#member

org1#member@philipp

org1#member@max

org2#member@james

Where ...

task323#owner@philippphilippis theowneroftask323.

task323#viewer@org1#membermembersoforg1arevieweroftask323

task152#viewer@org1#membermembersoforg1arevieweroftask152

task152#viewer@org2#membermembersoforg2arevieweroftask323

org1#member@philippphilippis amemberoforg1

org1#member@maxmaxis amemberoforg1

org2#member@jamesjamesis amemberoforg1

We could build a Role-based Access Control upon the Google Zanzibar data model, something that's already been noted in Google's original paper ...

[...] A number of Zanzibar clients have implemented RBAC policies on top of Zanzibar’s namespace configuration language. [...]

So using Role-based Access Control and Relationship-based Access Control is by no means a mutually exclusive decision. You can

easily build a Role-based Access Control model upon Relationship-based Access Control... use what's best for your use case. As

for ASP.NET Core, I imagine protecting the Controller with Roles and have Fine-Grained Access Control on Service-Level using

Relationships.

What we are going to build

We will build a small part of a Task Management System using ASP.NET Core and EntityFramework Core. The idea is to wrap the Check API and ListObjects API, developed in the previous Google Zanzibar article, with EntityFramework Core and integrate it into the ASP.NET Core pipeline.

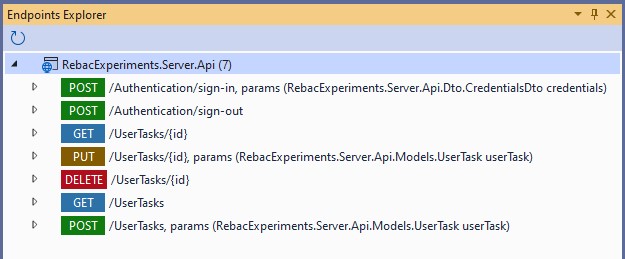

At the end of this article we will have a RESTful API, that authorizes a user using a Relationship-based Access

Control. We will see how to create the database, configure logging, run integration tests and use .http files

for manual endpoint tests in Visual Studio.

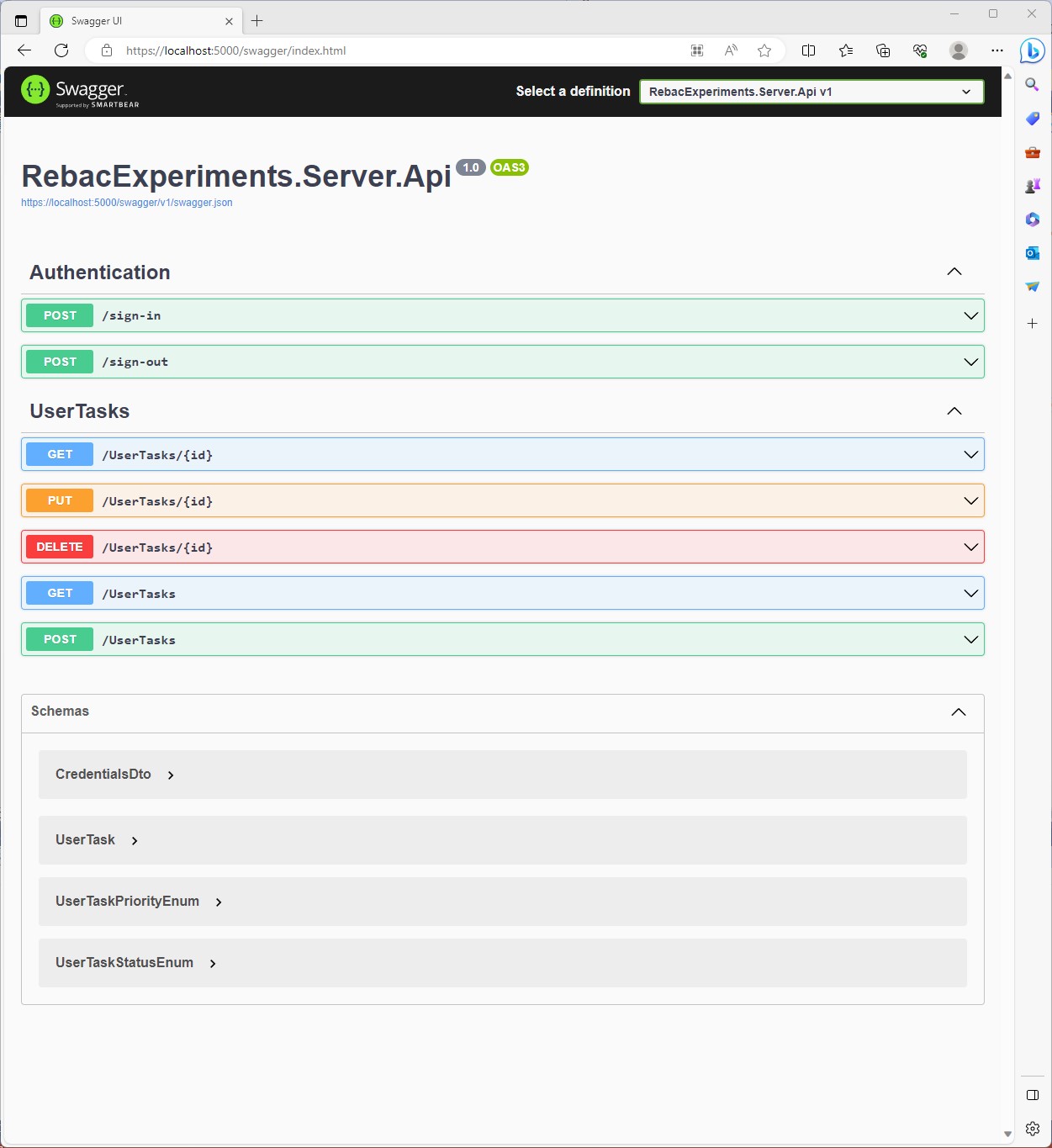

Here is the Swagger Overview for the final API Endpoints.

Database Design

If you are going to work with a relational database, then you should put all your database objects in version control. The best example for a SQL Server Database Project (SSDP) available out there is the "WideWorldImporters OLTP Database" example provided in the SQL Server examples repository:

Prerequisites

Project Structure

Before starting any database application, a team should agree on structure and naming conventions. You'll need to have a consistent style from the very start. Everyone has to know where to put files and the naming conventions to apply.

The high level structure for our SQL Server Database Project uses the [WideWorldImporters OLTP Database] structure:

«Schema»Function- Functions go here ...

Indexes- Indexes go here ...

Sequences- Sequences go here ...

Stored Procedures- Stored Procedures go here ...

Tables- Tables go here ...

Views- Views go here ...

«Schema».sql- Creates the Schema

Scripts«Schema»- Deployment Scripts for a Schema go here ...

Script.PostDeployment1.sql- Post Deployment Script to execute SQL scripts go here ...

SQL Server Object Name Convention

What's a "Naming Convention"?

A Naming convention is a set of rules for choosing the character sequence to be used for identifiers which denote variables, types, functions, and other entities in source code and documentation.

Reasons for using a naming convention (as opposed to allowing programmers to choose any character sequence) include the following:

- To reduce the effort needed to read and understand source code.

- To enable code reviews to focus on more important issues than arguing over syntax and naming standards.

- To enable code quality review tools to focus their reporting mainly on significant issues other than syntax and style preferences.

For SQL Server the following table is a good start.

| Object | Size | Plural | Prefix | Suffix | Example |

|---|---|---|---|---|---|

| Database | 30 | No | No | No | MyDatabase |

| Schema | 30 | No | No | No | MySchema |

| Global Temporary Table | 117 | No | No | No | ##MyTable |

| Local Temporary Table | 116 | No | No | No | #MyTable |

| File Table | 128 | No | FT_ | No | FT_MyTable |

| Temporal Table | 128 | No | No | History | MyTableHistory |

| Table Column | 128 | No | No | No | MyColumn |

| Columns Check Constraint | 128 | No | CTK_ | No | CTK_MyTable_Col1_Col2 |

| Column Check Constraint | 128 | No | CK_ | No | CK_MyTable_Col |

| Column Default Values | 128 | No | DF_ | No | DF_MyTable_Col |

| Table Primary Key | 128 | No | PK_ | No | PK_MyTable |

| Table Alternative Key | 128 | No | AK_ | No | AK_MyTable_Col1_Col2 |

| Table Foreign Key | 128 | No | FK_ | No | FK_MyTable_Col_Table_Col |

| Table Clustered Index | 128 | No | IXC_ | No | IXC_MyTable_Col1_Col2 |

| Table Non Clustered Index | 128 | No | IX_ | No | IX_MyTable_Col1_Col2 |

| Table Unique Index | 128 | No | UX_ | No | UX_MyTable_Col1_Col2 |

| DDL Trigger | 128 | No | TR_ | _DDL | TR_LogicalName_DDL |

| DML Trigger | 128 | No | TR_ | _DML | TR_MyTable_LogicalName_DML |

| Logon Trigger | 128 | No | TR_ | _LOG | TR_LogicalName_LOG |

| View | 128 | No | VI_ | No | VI_LogicalName |

| Indexed View | 128 | No | VIX_ | No | VIX_LogicalName |

| Statistic | 128 | No | ST_ | No | ST_MyTable_Col1_Col2 |

| Stored Procedure | 128 | No | usp_ | No | usp_LogicalName |

| Scalar User-Defined Function | 128 | No | udf_ | No | udf_FunctionLogicalName |

| Table-Valued Function | 128 | No | tvf_ | No | tvf_FunctionLogicalName |

| Sequence | 128 | No | sq_ | No | sq_TableName |

Auditing and Optimistic Locking

In my experience every database table should support optimistic locking and auditing baked in from the start, because:

- You'll always need a way to recover from accidental data changes or application errors.

- You'll always need a way to audit who was responsible for changes to the data.

- You'll always need a way to prevent applications from overriding each others data.

So as a convention every table in our application gets the following 4 additional columns:

| Column Name | Data Type | Allow NULL | Description |

|---|---|---|---|

| RowVersion | ROWVERSION | Yes | A Version automatically generated by SQL Server, to serve as a Concurrency Token) |

| LastEditedBy | INT | No | A Foreign Key to a `User` to track changes to the data |

| ValidFrom | DATETIME2(7) | No | Period start column: The system records the start time for the row in this column |

| ValidTo | DATETIME2(7) | No | Period end column: The system records the end time for the row in this column |

They don't hurt and they might turn out very useful. And if you don't need a history? Then just deactivate the Temporal Table and call it a day!

Temporal Tables

Few developers know about Temporal tables, also known as ...

[...] system-versioned temporal tables, are a database feature that brings built-in support for providing information about data stored in the table at any point in time, rather than only the data that is correct at the current moment in time.

Why do you want to use a Temporal Table at all?

Real data sources are dynamic and more often than not business decisions rely on insights that analysts can get from data evolution. Use cases for temporal tables include:

- Auditing all data changes and performing data forensics when necessary

- Reconstructing state of the data as of any time in the past

- Calculating trends over time

- Maintaining a slowly changing dimension for decision support applications

- Recovering from accidental data changes and application errors

It's easy to enable system versioning in Microsoft SQL Server, like we are doing for a [Application].[UserTask] table,

that's going to hold all Tasks assigned to a user:

CREATE TABLE [Application].[UserTask](

[UserTaskID] INT CONSTRAINT [DF_Application_UserTask_UserTaskID] DEFAULT (NEXT VALUE FOR [Application].[sq_UserTask]) NOT NULL,

[Title] NVARCHAR(50) NOT NULL,

[Description] NVARCHAR(2000) NOT NULL,

-- ...

[RowVersion] ROWVERSION NULL,

[LastEditedBy] INT NOT NULL,

[ValidFrom] DATETIME2 (7) GENERATED ALWAYS AS ROW START NOT NULL,

[ValidTo] DATETIME2 (7) GENERATED ALWAYS AS ROW END NOT NULL,

CONSTRAINT [PK_UserTask] PRIMARY KEY ([UserTaskID]),

-- ...

CONSTRAINT [FK_UserTask_User_LastEditedBy] FOREIGN KEY ([LastEditedBy]) REFERENCES [Identity].[User] ([UserID]),

CONSTRAINT [FK_UserTask_User_AssignedTo] FOREIGN KEY ([AssignedTo]) REFERENCES [Identity].[User] ([UserID]),

PERIOD FOR SYSTEM_TIME (ValidFrom, ValidTo)

) WITH (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Application].[UserTaskHistory]));

You can learn everything about Temporal Tables in the SQL Server documentation at:

Like everything in software engineering, temporal tables do not magically solve all problems and are a trade-off. You might run into problems on high volume datasets. You have to deactivate temporal tables before migrations and probably need to put it into single user mode during schema migrations.

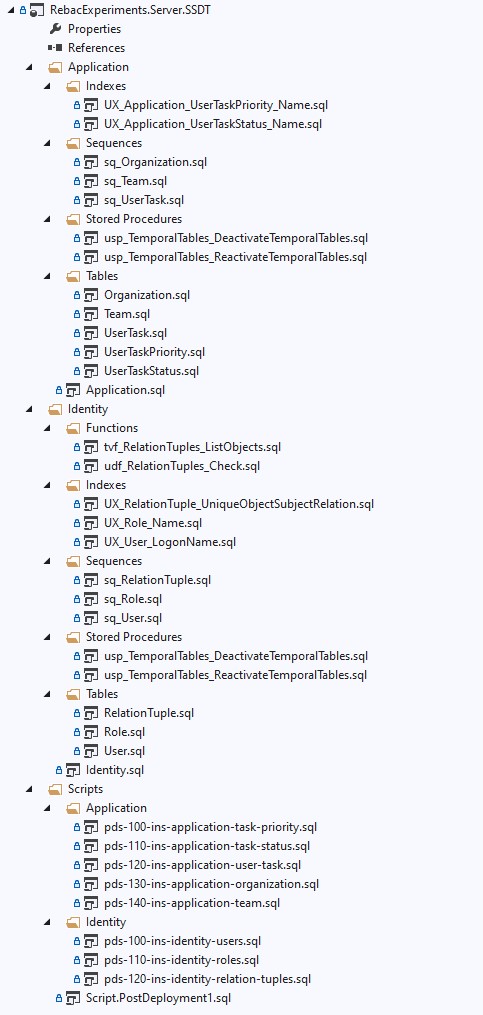

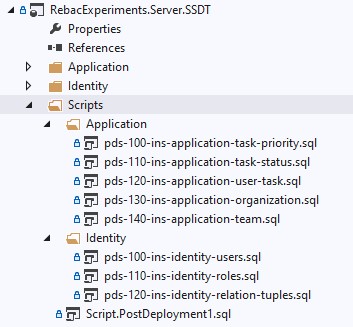

Database Project Overview

It's a good idea to get an overview for our database project first. We create a SQL Server Database Project (SSDP), call

it RebacExperiments.Server.SSDT and put all our Database Objects and Scripts into it.

There are two schemas named [Identity] and [Application]. The [Identity] schema, surprise, is going to hold all identity

related stuff, such as a User, a Role and the RelationTuple for defining the relationships between entities. It's also

going to hold the functions to list objects and check for permissions.

The [Application] schema is going to hold everything not directly related to the Identity management, such as UserTask,

Organization, Team, ... entities. The [Application] schema takes a hard dependency on the [Identity] schema, because

we refer to the [Identity].[User] table with a Foreign Key Constraint.

Schema "Identity"

Sequences

We are using Sequences instead of an Auto-Incrementing Primary Key. A Sequence has some advantadges, such as setting explicit start and increment values. And what's particularly interesting to us is, that we can use the Sequence to implement the Hi-Lo Pattern in code.

The EntityFramework documentation has the following to say about the Hi/Lo Algorithm:

The Hi/Lo algorithm is useful when you need unique keys before committing changes. As a summary, the Hi-Lo algorithm assigns unique identifiers to table rows while not depending on storing the row in the database immediately. This lets you start using the identifiers right away, as happens with regular sequential database IDs.

We define our sequences for all tables in their respective files as:

CREATE SEQUENCE [Identity].[sq_User]

AS INT

START WITH 38187

INCREMENT BY 1;

CREATE SEQUENCE [Identity].[sq_Role]

AS INT

START WITH 38187

INCREMENT BY 1;

CREATE SEQUENCE [Identity].[sq_RelationTuple]

AS INT

START WITH 38187

INCREMENT BY 1;

Tables

The application has a very simple User model. A user may be permitted to Logon using a Logon Name, the Logon Name

is unique among all users. The password hashing is done on application-level, when logging into the system or

registering users.

CREATE TABLE [Identity].[User](

[UserID] INT CONSTRAINT [DF_Identity_User_UserID] DEFAULT (NEXT VALUE FOR [Identity].[sq_User]) NOT NULL,

[FullName] NVARCHAR(50) NOT NULL,

[PreferredName] NVARCHAR(50) NULL,

[IsPermittedToLogon] BIT NOT NULL,

[LogonName] NVARCHAR (256) NULL,

[HashedPassword] NVARCHAR (MAX) NULL,

[RowVersion] ROWVERSION NULL,

[LastEditedBy] INT NOT NULL,

[ValidFrom] DATETIME2 (7) GENERATED ALWAYS AS ROW START NOT NULL,

[ValidTo] DATETIME2 (7) GENERATED ALWAYS AS ROW END NOT NULL,

CONSTRAINT [PK_User] PRIMARY KEY ([UserID]),

CONSTRAINT [FK_User_LastEditedBy_User_UserID] FOREIGN KEY ([LastEditedBy]) REFERENCES [Identity].[User] ([UserID]),

PERIOD FOR SYSTEM_TIME (ValidFrom, ValidTo)

) WITH (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Identity].[UserHistory]));

To build a Role-based Access Control on top of the Relationship-based model, we add a table [Identity].[Role] to hold all roles available in our system.

CREATE TABLE [Identity].[Role](

[RoleID] INT CONSTRAINT [DF_Identity_Role_RoleID] DEFAULT (NEXT VALUE FOR [Identity].[sq_Role]) NOT NULL,

[Name] NVARCHAR(255) NOT NULL,

[Description] NVARCHAR(2000) NULL,

[RowVersion] ROWVERSION NULL,

[LastEditedBy] INT NOT NULL,

[ValidFrom] DATETIME2 (7) GENERATED ALWAYS AS ROW START NOT NULL,

[ValidTo] DATETIME2 (7) GENERATED ALWAYS AS ROW END NOT NULL,

CONSTRAINT [PK_Role] PRIMARY KEY ([RoleID]),

CONSTRAINT [FK_Role_LastEditedBy_User_UserID] FOREIGN KEY ([LastEditedBy]) REFERENCES [Identity].[User] ([UserID]),

PERIOD FOR SYSTEM_TIME (ValidFrom, ValidTo)

) WITH (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Identity].[RoleHistory]));

The table [Identity].[RelationTuple] is the secret sauce here, that's going to be at the heart of the Relationship-based Access Control. As

you can see, the [ObjectKey] and [SubjectKey] has been defined as an INT column type, so we make the assumption, that all tables in our

database have INT Primary Key.

CREATE TABLE [Identity].[RelationTuple](

[RelationTupleID] INT CONSTRAINT [DF_Identity_RelationTuple_RelationTupleID] DEFAULT (NEXT VALUE FOR [Identity].[sq_RelationTuple]) NOT NULL,

[ObjectKey] INT NOT NULL,

[ObjectNamespace] NVARCHAR(50) NOT NULL,

[ObjectRelation] NVARCHAR(50) NOT NULL,

[SubjectKey] INT NOT NULL,

[SubjectNamespace] NVARCHAR(50) NOT NULL,

[SubjectRelation] NVARCHAR(50) NULL,

[RowVersion] ROWVERSION NULL,

[LastEditedBy] INT NOT NULL,

[ValidFrom] DATETIME2 (7) GENERATED ALWAYS AS ROW START NOT NULL,

[ValidTo] DATETIME2 (7) GENERATED ALWAYS AS ROW END NOT NULL,

CONSTRAINT [PK_RelationTupleID] PRIMARY KEY ([RelationTupleID]),

CONSTRAINT [FK_RelationTuple_LastEditedBy_User_UserID] FOREIGN KEY ([LastEditedBy]) REFERENCES [Identity].[User] ([UserID]),

PERIOD FOR SYSTEM_TIME (ValidFrom, ValidTo)

) WITH (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Identity].[RelationTupleHistory]));

Functions

The Google Zanzibar "Check API" is implemented by the User Defined Function [Identity].[udf_RelationTuples_Check], which

is explained in great detail in my article Exploring Relationship-based Access Control (ReBAC) with Google Zanzibar.

CREATE FUNCTION [Identity].[udf_RelationTuples_Check]

(

@ObjectNamespace NVARCHAR(50)

,@ObjectKey INT

,@ObjectRelation NVARCHAR(50)

,@SubjectNamespace NVARCHAR(50)

,@SubjectKey INT

)

RETURNS BIT

AS

BEGIN

DECLARE @IsAuthorized BIT = 0;

WITH RelationTuples AS

(

SELECT

[RelationTupleID]

,[ObjectNamespace]

,[ObjectKey]

,[ObjectRelation]

,[SubjectNamespace]

,[SubjectKey]

,[SubjectRelation]

, 0 AS [HierarchyLevel]

FROM

[Identity].[RelationTuple]

WHERE

[ObjectNamespace] = @ObjectNamespace AND [ObjectKey] = @ObjectKey AND [ObjectRelation] = @ObjectRelation

UNION All

SELECT

r.[RelationTupleID]

,r.[ObjectNamespace]

,r.[ObjectKey]

,r.[ObjectRelation]

,r.[SubjectNamespace]

,r.[SubjectKey]

,r.[SubjectRelation]

,[HierarchyLevel] + 1 AS [HierarchyLevel]

FROM

[Identity].[RelationTuple] r, [RelationTuples] cte

WHERE

cte.[SubjectKey] = r.[ObjectKey]

AND cte.[SubjectNamespace] = r.[ObjectNamespace]

AND cte.[SubjectRelation] = r.[ObjectRelation]

)

SELECT @IsAuthorized =

CASE

WHEN EXISTS(SELECT 1 FROM [RelationTuples] WHERE [SubjectNamespace] = @SubjectNamespace AND [SubjectKey] = @SubjectKey)

THEN 1

ELSE 0

END;

RETURN @IsAuthorized;

END

And you want to answer questions like "What Tasks is a User allowed to see?", "What Organizations is a User

member of?". This can be done by using the ListObjects function, that has been written in a previous

article.

CREATE FUNCTION [Identity].[tvf_RelationTuples_ListObjects]

(

@ObjectNamespace NVARCHAR(50)

,@ObjectRelation NVARCHAR(50)

,@SubjectNamespace NVARCHAR(50)

,@SubjectKey INT

)

RETURNS @returntable TABLE

(

[RelationTupleID] INT

,[ObjectNamespace] NVARCHAR(50)

,[ObjectKey] INT

,[ObjectRelation] NVARCHAR(50)

,[SubjectNamespace] NVARCHAR(50)

,[SubjectKey] INT

,[SubjectRelation] NVARCHAR(50)

)

AS

BEGIN

WITH RelationTuples AS

(

SELECT

[RelationTupleID]

,[ObjectNamespace]

,[ObjectKey]

,[ObjectRelation]

,[SubjectNamespace]

,[SubjectKey]

,[SubjectRelation]

, 0 AS [HierarchyLevel]

FROM

[Identity].[RelationTuple]

WHERE

[SubjectNamespace] = @SubjectNamespace AND [SubjectKey] = @SubjectKey

UNION All

SELECT

r.[RelationTupleID]

,r.[ObjectNamespace]

,r.[ObjectKey]

,r.[ObjectRelation]

,r.[SubjectNamespace]

,r.[SubjectKey]

,r.[SubjectRelation]

,[HierarchyLevel] + 1 AS [HierarchyLevel]

FROM

[Identity].[RelationTuple] r, [RelationTuples] cte

WHERE

cte.[ObjectKey] = r.[SubjectKey]

AND cte.[ObjectNamespace] = r.[SubjectNamespace]

AND cte.[ObjectRelation] = r.[SubjectRelation]

)

INSERT

@returntable

SELECT DISTINCT

[RelationTupleID], [ObjectNamespace], [ObjectKey], [ObjectRelation], [SubjectNamespace], [SubjectKey], [SubjectRelation]

FROM

[RelationTuples]

WHERE

[ObjectNamespace] = @ObjectNamespace AND [ObjectRelation] = @ObjectRelation;

RETURN;

END

Stored Procedures

The tables are system versioned, but for inserting the initial data with explicit ValidFrom and ValidTo values,

we need to turn the versioning off. So we are adding a Stored Procedure [Identity].[usp_TemporalTables_DeactivateTemporalTables] to

deactivate the versioning.

CREATE PROCEDURE [Identity].[usp_TemporalTables_DeactivateTemporalTables]

AS BEGIN

IF OBJECTPROPERTY(OBJECT_ID('[Identity].[User]'), 'TableTemporalType') = 2

BEGIN

PRINT 'Deactivate Temporal Table for [Identity].[User]'

ALTER TABLE [Identity].[User] SET (SYSTEM_VERSIONING = OFF);

ALTER TABLE [Identity].[User] DROP PERIOD FOR SYSTEM_TIME;

END

IF OBJECTPROPERTY(OBJECT_ID('[Identity].[Role]'), 'TableTemporalType') = 2

BEGIN

PRINT 'Deactivate Temporal Table for [Identity].[Role]'

ALTER TABLE [Identity].[Role] SET (SYSTEM_VERSIONING = OFF);

ALTER TABLE [Identity].[Role] DROP PERIOD FOR SYSTEM_TIME;

END

IF OBJECTPROPERTY(OBJECT_ID('[Identity].[RelationTuple]'), 'TableTemporalType') = 2

BEGIN

PRINT 'Deactivate Temporal Table for [Identity].[RelationTuple]'

ALTER TABLE [Identity].[RelationTuple] SET (SYSTEM_VERSIONING = OFF);

ALTER TABLE [Identity].[RelationTuple] DROP PERIOD FOR SYSTEM_TIME;

END

END

After the data has been inserted, we want to reactivate the versioning again. So we are also adding a Stored Procedure

[Identity].[usp_TemporalTables_ReactivateTemporalTables], that restores the system versioning.

CREATE PROCEDURE [Identity].[usp_TemporalTables_ReactivateTemporalTables]

AS BEGIN

IF OBJECTPROPERTY(OBJECT_ID('[Identity].[User]'), 'TableTemporalType') = 0

BEGIN

PRINT 'Reactivate Temporal Table for [Identity].[User]'

ALTER TABLE [Identity].[User] ADD PERIOD FOR SYSTEM_TIME([ValidFrom], [ValidTo]);

ALTER TABLE [Identity].[User] SET (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Identity].[UserHistory], DATA_CONSISTENCY_CHECK = ON));

END

IF OBJECTPROPERTY(OBJECT_ID('[Identity].[Role]'), 'TableTemporalType') = 0

BEGIN

PRINT 'Reactivate Temporal Table for [Identity].[Role]'

ALTER TABLE [Identity].[Role] ADD PERIOD FOR SYSTEM_TIME([ValidFrom], [ValidTo]);

ALTER TABLE [Identity].[Role] SET (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Identity].[RoleHistory], DATA_CONSISTENCY_CHECK = ON));

END

IF OBJECTPROPERTY(OBJECT_ID('[Identity].[RelationTuple]'), 'TableTemporalType') = 0

BEGIN

PRINT 'Reactivate Temporal Table for [Identity].[RelationTuple]'

ALTER TABLE [Identity].[RelationTuple] ADD PERIOD FOR SYSTEM_TIME([ValidFrom], [ValidTo]);

ALTER TABLE [Identity].[RelationTuple] SET (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Identity].[RelationTupleHistory], DATA_CONSISTENCY_CHECK = ON));

END

END

Schema: Application

Tables

The Task Management Application deals with... Tasks. I named this a UserTask for the sole reason, that Task is

too ambigous in .NET and you'll often accidentally refer to a System.Threading.Task... without noticing.

CREATE TABLE [Application].[UserTask](

[UserTaskID] INT CONSTRAINT [DF_Application_UserTask_UserTaskID] DEFAULT (NEXT VALUE FOR [Application].[sq_UserTask]) NOT NULL,

[Title] NVARCHAR(50) NOT NULL,

[Description] NVARCHAR(2000) NOT NULL,

[DueDateTime] DATETIME2(7) NULL,

[ReminderDateTime] DATETIME2(7) NULL,

[CompletedDateTime] DATETIME2(7) NULL,

[AssignedTo] INT NULL,

[UserTaskPriorityID] INT NOT NULL,

[UserTaskStatusID] INT NOT NULL,

[RowVersion] ROWVERSION NULL,

[LastEditedBy] INT NOT NULL,

[ValidFrom] DATETIME2 (7) GENERATED ALWAYS AS ROW START NOT NULL,

[ValidTo] DATETIME2 (7) GENERATED ALWAYS AS ROW END NOT NULL,

CONSTRAINT [PK_UserTask] PRIMARY KEY ([UserTaskID]),

CONSTRAINT [FK_UserTask_UserTaskPriority_UserTaskPriorityID] FOREIGN KEY ([UserTaskPriorityID]) REFERENCES [Application].[UserTaskPriority] ([UserTaskPriorityID]),

CONSTRAINT [FK_UserTask_UserTaskStatus_UserTaskStatusID] FOREIGN KEY ([UserTaskStatusID]) REFERENCES [Application].[UserTaskStatus] ([UserTaskStatusID]),

CONSTRAINT [FK_UserTask_User_LastEditedBy] FOREIGN KEY ([LastEditedBy]) REFERENCES [Identity].[User] ([UserID]),

CONSTRAINT [FK_UserTask_User_AssignedTo] FOREIGN KEY ([AssignedTo]) REFERENCES [Identity].[User] ([UserID]),

PERIOD FOR SYSTEM_TIME (ValidFrom, ValidTo)

) WITH (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Application].[UserTaskHistory]));

A UserTask always has a priority assigned, so you know which task needs to be processed.

We add a table [Application].[UserTaskPriority].

CREATE TABLE [Application].[UserTaskPriority](

[UserTaskPriorityID] INT NOT NULL,

[Name] NVARCHAR(50) NOT NULL,

[RowVersion] ROWVERSION NULL,

[LastEditedBy] INT NOT NULL,

[ValidFrom] DATETIME2 (7) GENERATED ALWAYS AS ROW START NOT NULL,

[ValidTo] DATETIME2 (7) GENERATED ALWAYS AS ROW END NOT NULL,

CONSTRAINT [PK_UserTaskPriority] PRIMARY KEY ([UserTaskPriorityID]),

CONSTRAINT [FK_UserTaskPriority_User_LastEditedBy] FOREIGN KEY ([LastEditedBy]) REFERENCES [Identity].[User] ([UserID]),

PERIOD FOR SYSTEM_TIME (ValidFrom, ValidTo)

) WITH (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Application].[UserTaskPriorityHistory]));

A UserTask needs a status. Has the UserTask been assigned? Is it waiting for others? Has it been defered? Has it been

completed? We add a table [Application].[UserTaskStatus].

CREATE TABLE [Application].[UserTaskStatus](

[UserTaskStatusID] INT NOT NULL,

[Name] NVARCHAR(50) NOT NULL,

[RowVersion] ROWVERSION NULL,

[LastEditedBy] INT NOT NULL,

[ValidFrom] DATETIME2 (7) GENERATED ALWAYS AS ROW START NOT NULL,

[ValidTo] DATETIME2 (7) GENERATED ALWAYS AS ROW END NOT NULL,

CONSTRAINT [PK_UserTaskStatus] PRIMARY KEY ([UserTaskStatusID]),

CONSTRAINT [FK_UserTaskStatus_User_LastEditedBy] FOREIGN KEY ([LastEditedBy]) REFERENCES [Identity].[User] ([UserID]),

PERIOD FOR SYSTEM_TIME (ValidFrom, ValidTo)

) WITH (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Application].[UserTaskStatusHistory]));

A user may be part of a Team, so we can for example make a User a member of a Team, and we can

assign a viewer permission from a Team to a UserTask.

CREATE TABLE [Application].[Team](

[TeamID] INT CONSTRAINT [DF_Application_Team_TeamID] DEFAULT (NEXT VALUE FOR [Application].[sq_Team]) NOT NULL,

[Name] NVARCHAR(255) NOT NULL,

[Description] NVARCHAR(2000) NOT NULL,

[RowVersion] ROWVERSION NULL,

[LastEditedBy] INT NOT NULL,

[ValidFrom] DATETIME2 (7) GENERATED ALWAYS AS ROW START NOT NULL,

[ValidTo] DATETIME2 (7) GENERATED ALWAYS AS ROW END NOT NULL,

CONSTRAINT [PK_Team] PRIMARY KEY ([TeamID]),

CONSTRAINT [FK_Team_User_LastEditedBy] FOREIGN KEY ([LastEditedBy]) REFERENCES [Identity].[User] ([UserID]),

PERIOD FOR SYSTEM_TIME (ValidFrom, ValidTo)

) WITH (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Application].[TeamHistory]));

A user may also be part of an Organization, so we can for example make a User a member of an Organization, and we can

assign a viewer permission from an Organization to a UserTask.

CREATE TABLE [Application].[Organization](

[OrganizationID] INT CONSTRAINT [DF_Application_Organization_OrganizationID] DEFAULT (NEXT VALUE FOR [Application].[sq_Organization]) NOT NULL,

[Name] NVARCHAR(255) NOT NULL,

[Description] NVARCHAR(2000) NOT NULL,

[RowVersion] ROWVERSION NULL,

[LastEditedBy] INT NOT NULL,

[ValidFrom] DATETIME2 (7) GENERATED ALWAYS AS ROW START NOT NULL,

[ValidTo] DATETIME2 (7) GENERATED ALWAYS AS ROW END NOT NULL,

CONSTRAINT [PK_Organization] PRIMARY KEY ([OrganizationID]),

CONSTRAINT [FK_Organization_User_LastEditedBy] FOREIGN KEY ([LastEditedBy]) REFERENCES [Identity].[User] ([UserID]),

PERIOD FOR SYSTEM_TIME (ValidFrom, ValidTo)

) WITH (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Application].[OrganizationHistory]));

Stored Procedures

As mentioned, all our tables are system-versioned, and we need to deactivate them before running our initial

inserts. So we add a Stored Procedure [Application].[usp_TemporalTables_DeactivateTemporalTables] to deactivate

the versioning.

CREATE PROCEDURE [Application].[usp_TemporalTables_DeactivateTemporalTables]

AS BEGIN

IF OBJECTPROPERTY(OBJECT_ID('[Application].[UserTask]'), 'TableTemporalType') = 2

BEGIN

PRINT 'Deactivate Temporal Table for [Application].[UserTask]'

ALTER TABLE [Application].[UserTask] SET (SYSTEM_VERSIONING = OFF);

ALTER TABLE [Application].[UserTask] DROP PERIOD FOR SYSTEM_TIME;

END

IF OBJECTPROPERTY(OBJECT_ID('[Application].[UserTaskPriority]'), 'TableTemporalType') = 2

BEGIN

PRINT 'Deactivate Temporal Table for [Application].[UserTaskPriority]'

ALTER TABLE [Application].[UserTaskPriority] SET (SYSTEM_VERSIONING = OFF);

ALTER TABLE [Application].[UserTaskPriority] DROP PERIOD FOR SYSTEM_TIME;

END

IF OBJECTPROPERTY(OBJECT_ID('[Application].[UserTaskStatus]'), 'TableTemporalType') = 2

BEGIN

PRINT 'Deactivate Temporal Table for [Application].[UserTaskStatus]'

ALTER TABLE [Application].[UserTaskStatus] SET (SYSTEM_VERSIONING = OFF);

ALTER TABLE [Application].[UserTaskStatus] DROP PERIOD FOR SYSTEM_TIME;

END

IF OBJECTPROPERTY(OBJECT_ID('[Application].[Organization]'), 'TableTemporalType') = 2

BEGIN

PRINT 'Deactivate Temporal Table for [Application].[Organization]'

ALTER TABLE [Application].[Organization] SET (SYSTEM_VERSIONING = OFF);

ALTER TABLE [Application].[Organization] DROP PERIOD FOR SYSTEM_TIME;

END

IF OBJECTPROPERTY(OBJECT_ID('[Application].[Team]'), 'TableTemporalType') = 2

BEGIN

PRINT 'Deactivate Temporal Table for [Application].[Team]'

ALTER TABLE [Application].[Team] SET (SYSTEM_VERSIONING = OFF);

ALTER TABLE [Application].[Team] DROP PERIOD FOR SYSTEM_TIME;

END

END

After running the Post-Deployment Scripts, we are reactivating the System-Versioning by executing

the Stored Procedure [Application].[usp_TemporalTables_ReactivateTemporalTables].

CREATE PROCEDURE [Application].[usp_TemporalTables_ReactivateTemporalTables]

AS BEGIN

IF OBJECTPROPERTY(OBJECT_ID('[Application].[UserTask]'), 'TableTemporalType') = 0

BEGIN

PRINT 'Reactivate Temporal Table for [Application].[UserTask]'

ALTER TABLE [Application].[UserTask] ADD PERIOD FOR SYSTEM_TIME([ValidFrom], [ValidTo]);

ALTER TABLE [Application].[UserTask] SET (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Application].[UserTaskHistory], DATA_CONSISTENCY_CHECK = ON));

END

IF OBJECTPROPERTY(OBJECT_ID('[Application].[UserTaskPriority]'), 'TableTemporalType') = 0

BEGIN

PRINT 'Reactivate Temporal Table for [Application].[UserTaskPriority]'

ALTER TABLE [Application].[UserTaskPriority] ADD PERIOD FOR SYSTEM_TIME([ValidFrom], [ValidTo]);

ALTER TABLE [Application].[UserTaskPriority] SET (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Application].[UserTaskPriorityHistory], DATA_CONSISTENCY_CHECK = ON));

END

IF OBJECTPROPERTY(OBJECT_ID('[Application].[UserTaskStatus]'), 'TableTemporalType') = 0

BEGIN

PRINT 'Reactivate Temporal Table for [Application].[UserTaskStatus]'

ALTER TABLE [Application].[UserTaskStatus] ADD PERIOD FOR SYSTEM_TIME([ValidFrom], [ValidTo]);

ALTER TABLE [Application].[UserTaskStatus] SET (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Application].[UserTaskStatusHistory], DATA_CONSISTENCY_CHECK = ON));

END

IF OBJECTPROPERTY(OBJECT_ID('[Application].[Organization]'), 'TableTemporalType') = 0

BEGIN

PRINT 'Reactivate Temporal Table for [Application].[Organization]'

ALTER TABLE [Application].[Organization] ADD PERIOD FOR SYSTEM_TIME([ValidFrom], [ValidTo]);

ALTER TABLE [Application].[Organization] SET (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Application].[OrganizationHistory], DATA_CONSISTENCY_CHECK = ON));

END

IF OBJECTPROPERTY(OBJECT_ID('[Application].[Team]'), 'TableTemporalType') = 0

BEGIN

PRINT 'Reactivate Temporal Table for [Application].[Team]'

ALTER TABLE [Application].[Team] ADD PERIOD FOR SYSTEM_TIME([ValidFrom], [ValidTo]);

ALTER TABLE [Application].[Team] SET (SYSTEM_VERSIONING = ON (HISTORY_TABLE = [Application].[TeamHistory], DATA_CONSISTENCY_CHECK = ON));

END

END

Post Deployment Scripts

Post-Deployment Scripts are used to seed the database with initial data. A User is needed to do anything in our system,

the UserTaskPriority and UserTaskStatus tables need to have values, that map to an applications enumeration.

The Script.PostDeployment1.sql script starts by deactivating the System-Versioning on the tables and then runs

the scripts for creating data in the [Identity] and [Application] schema.

/*

Post-Deployment Script Template

--------------------------------------------------------------------------------------

This file contains SQL statements that will be appended to the build script.

Use SQLCMD syntax to include a file in the post-deployment script.

Example: :r .\myfile.sql

Use SQLCMD syntax to reference a variable in the post-deployment script.

Example: :setvar TableName MyTable

SELECT * FROM [$(TableName)]

--------------------------------------------------------------------------------------

*/

/*

We need to deactivate all Temporal Tables before the initial data load.

*/

EXEC [Identity].[usp_TemporalTables_DeactivateTemporalTables]

GO

EXEC [Application].[usp_TemporalTables_DeactivateTemporalTables]

GO

/*

Set the initial data for the [Identity] schema

*/

:r .\Identity\pds-100-ins-identity-users.sql

GO

:r .\Identity\pds-110-ins-identity-roles.sql

GO

:r .\Identity\pds-120-ins-identity-relation-tuples.sql

GO

/*

Set the initial data for the [Application] schema

*/

:r .\Application\pds-100-ins-application-task-priority.sql

GO

:r .\Application\pds-110-ins-application-task-status.sql

GO

:r .\Application\pds-120-ins-application-user-task.sql

GO

:r .\Application\pds-130-ins-application-organization.sql

GO

:r .\Application\pds-140-ins-application-team.sql

GO

/*

We need to reactivate all Temporal Tables after the initial data load.

*/

EXEC [Identity].[usp_TemporalTables_ReactivateTemporalTables]

GO

EXEC [Application].[usp_TemporalTables_ReactivateTemporalTables]

GO

We want to be able to re-run the Post-Deployment Scripts, without running into constraint violations, such

as Primary Key conflicts. So we are using a MERGE statement, to only insert data, if it doesn't exist yet.

An example is the initial data for the [Identity].[User] table.

PRINT 'Inserting [Identity].[User] ...'

-----------------------------------------------

-- Global Parameters

-----------------------------------------------

DECLARE @ValidFrom datetime2(7) = '20130101'

DECLARE @ValidTo datetime2(7) = '99991231 23:59:59.9999999'

-----------------------------------------------

-- [Identity].[User]

-----------------------------------------------

MERGE INTO [Identity].[User] AS [Target]

USING (VALUES

(1, 'Data Conversion Only', 'Data Conversion Only', 0, NULL, NULL, 1, @ValidFrom, @ValidTo)

,(2, 'Philipp Wagner', 'Philipp Wagner', 1, 'philipp@bytefish.de', 'AQAAAAIAAYagAAAAELbMFL9utkwA7FK4QoUCZEK/jPiHhTMzuFllrszW7FuCJBHjLVBCWXJCuFFJyRllYg==', 1, @ValidFrom, @ValidTo) --5!F25GbKwU3P

,(3, 'John Doe', 'John Doe', 0, 'john@doe.localhost', NULL, 1, @ValidFrom, @ValidTo)

,(4, 'Max Powers', 'Max Powers', 0, 'max@powers.localhost', NULL, 1, @ValidFrom, @ValidTo)

,(5, 'James Bond', '007', 0, 'james@bond.localhost', NULL, 1, @ValidFrom, @ValidTo)

,(6, 'John Connor', 'John Connor', 0, 'john@connor.localhost', NULL, 1, @ValidFrom, @ValidTo)

,(7, 'Max Mustermann', 'Max Mustermann', 1, 'max@mustermann.local', 'AQAAAAIAAYagAAAAELbMFL9utkwA7FK4QoUCZEK/jPiHhTMzuFllrszW7FuCJBHjLVBCWXJCuFFJyRllYg==', 1, @ValidFrom, @ValidTo) --5!F25GbKwU3P

) AS [Source]([UserID], [FullName], [PreferredName], [IsPermittedToLogon], [LogonName], [HashedPassword], [LastEditedBy], [ValidFrom], [ValidTo])

ON ([Target].[UserID] = [Source].[UserID])

WHEN NOT MATCHED BY TARGET THEN

INSERT

([UserID], [FullName], [PreferredName], [IsPermittedToLogon], [LogonName], [HashedPassword], [LastEditedBy], [ValidFrom], [ValidTo])

VALUES

([Source].[UserID], [Source].[FullName], [Source].[PreferredName], [Source].[IsPermittedToLogon], [Source].[LogonName], [Source].[HashedPassword], [Source].[LastEditedBy], [Source].[ValidFrom], [Source].[ValidTo]);

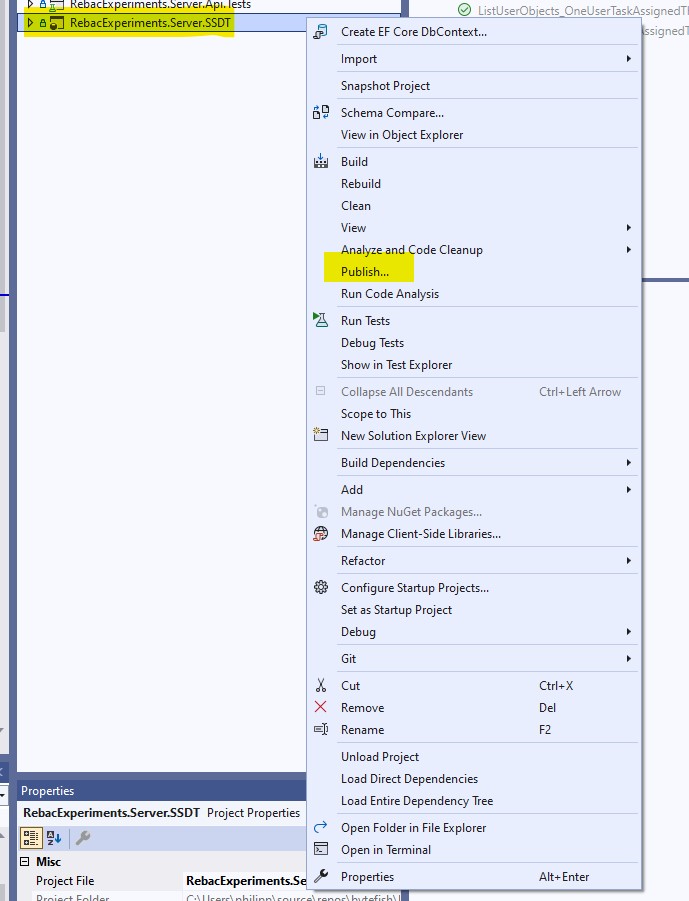

Deploying the Database

The database is done, so we can now deploy it.

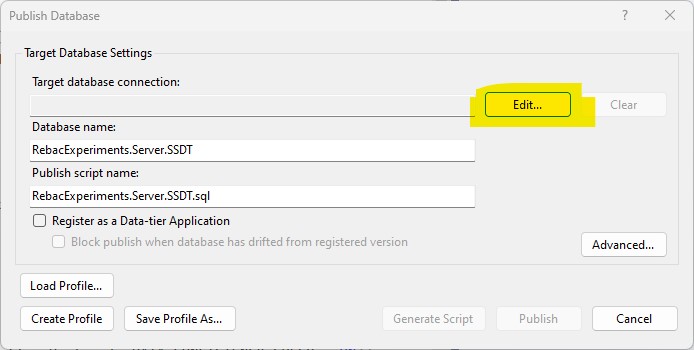

You start by doing a Right Click on the database project and select Publish ....

In the dialog we can see, that the Target Database Connection is empty, and we don't have a database yet. So we

create one by clicking the Edit ... button.

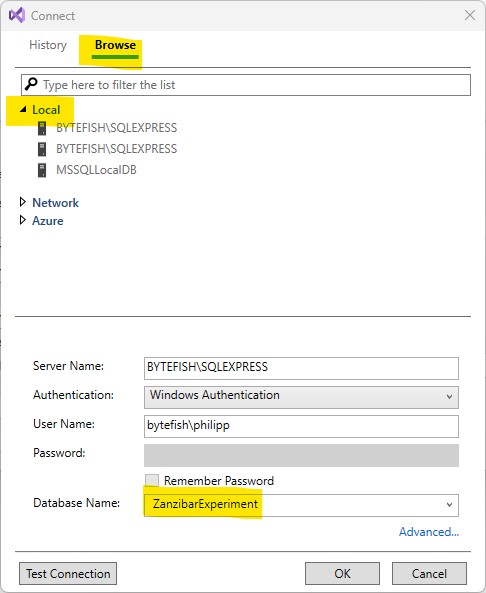

I want to have it running on a local database, so I am selecting Local in the Tree View, and select the SQLEXPRESS

instance. The Server Name and so on gets automatically filled, and we just need to set our database name. I have named

it ZanzibarExperiment, because it's the applications target database name.

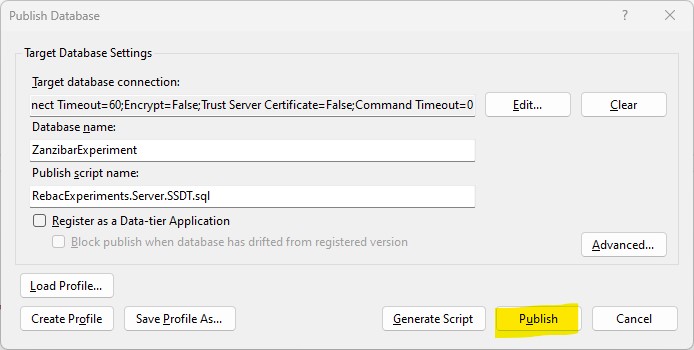

As we can see, the Target Database Connection is now automatically set, and we just need to click the Publish button.

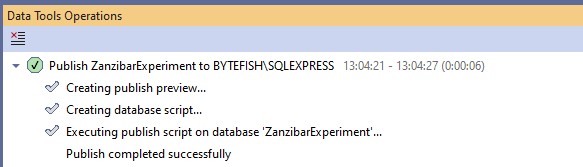

The database is now being published and we can inspect the Data Tools Operation pane, to see if it worked.

Congratulations!

ASP.NET Core Backend with Relationship-based Access Control

Prerequisites

There are some prerequisites, that we want to take a look at. It's because... for every project I have started I need to gather all the information from Microsoft Learn, GitHub or my previous projects, and its good to have it all here.

Logging

Logging is among the most important things, because your application will fail and you really need to be able to investigate the reasons.

ASP.NET Core now comes with the Microsoft.Extensions.Logging abstractions, so you can plug in any logging framework you

like. I like Serilog a lot and use it in this example, but I've also had a good experience with NLog, so pick your

favorite framework. By using the Microsoft.Extensions.Logging we can swap the Logging framework anyways.

For Serilog we start by adding the Serilog Core and its Microsoft.Extensions.Logging integration.

<PackageReference Include="Serilog" Version="3.0.1" />

<PackageReference Include="Serilog.Extensions.Logging" Version="7.0.0" />

In this example we configure a Console and a File sink, so we also need to add the following NuGet packages:

<PackageReference Include="Serilog.Sinks.Console" Version="4.1.0" />

<PackageReference Include="Serilog.Sinks.File" Version="5.0.0" />

And we want to enrich the Logs with the Machine and Environment Name, so we are adding a dependency on:

<PackageReference Include="Serilog.Enrichers.Environment" Version="2.3.0" />

At the beginning of the Program.cs (or where your Startup is) you configure and create a Log.Logger instance.

// We will log to %LocalAppData%/RebacExperiments to store the Logs, so it doesn't need to be configured

// to a different path, when you run it on your machine.

string logDirectory = Path.Combine(Environment.GetFolderPath(Environment.SpecialFolder.LocalApplicationData), "RebacExperiments");

// We are writing with RollingFileAppender using a daily rotation, and we want to have the filename as

// as "LogRebacExperiments-{Date}.log", the "{Date}" placeholder will be replaced by Serilog itself.

string logFilePath = Path.Combine(logDirectory, "LogRebacExperiments-.log");

// Configure the Serilog Logger. This Serilog Logger will be passed

// to the Microsoft.Extensions.Logging LoggingBuilder using the

// LoggingBuilder#AddSerilog(...) extension.

Log.Logger = new LoggerConfiguration()

.Enrich.FromLogContext()

.Enrich.WithMachineName()

.Enrich.WithEnvironmentName()

.WriteTo.Console(theme: AnsiConsoleTheme.Code)

.WriteTo.File(logFilePath, rollingInterval: RollingInterval.Day)

.CreateLogger();

We can then use the LoggingBuilder#AddSerilog extension method to register Serilog with the Microsoft.Extensions.Logging framework.

// Logging

builder.Services.AddLogging(loggingBuilder => loggingBuilder.AddSerilog(dispose: true));

I suggest to wrap the entire Starup code inside a fat try block, so any error throwing during startup is caught and

logged to a console or the file sink.

// ...

try

{

var builder = WebApplication.CreateBuilder(args);

// Add services to the container

builder.Services.AddSingleton<IUserService, UserService>();

// ...

var app = builder.Build();

// Configure the HTTP request pipeline.

app.UseHttpsRedirection();

// ...

app.Run();

}

catch(Exception exception)

{

Log.Fatal(exception, "An unhandeled exception occured.");

}

finally

{

// Wait 0.5 seconds before closing and flushing, to gather the last few logs.

await Task.Delay(TimeSpan.FromMilliseconds(500));

await Log.CloseAndFlushAsync();

}

In your application you would then inject a Microsoft.Extensions.Logging.ILogger abstraction to your services, which

is going to be created by the dependency injection container for you.

// Licensed under the MIT license. See LICENSE file in the project root for full license information.

// ...

namespace RebacExperiments.Server.Api.Controllers

{

public class AuthenticationController : ControllerBase

{

private readonly ILogger<AuthenticationController> _logger;

public AuthenticationController(ILogger<AuthenticationController> logger)

{

_logger = logger;

}

// ...

}

}

You shouldn't use the Log.Logger singleton directly in your code, as this would make you take a hard dependency on Serilog. Just know,

that it's totally possible to do so, if you ever need to take a shortcut for your logging or need features only the Serilog logger

provides.

I have once worked in a .NET Framework project, which used log4net. And the log4net ILog abstraction comes with

properties like IsDebugEnabled or IsErrorEnabled to check the Log Level. That's useful, because you sometimes

need to prepare a log message, like transforming a list of objects into something human readable, only in a

Debug log level.

// Licensed under the MIT license. See LICENSE file in the project root for full license information.

using System.Runtime.CompilerServices;

namespace RebacExperiments.Server.Api.Infrastructure.Logging

{

public static class LoggerExtensions

{

public static bool IsDebugEnabled<TLoggerType>(this ILogger<TLoggerType> logger)

{

return logger.IsEnabled(LogLevel.Debug);

}

public static bool IsCriticalEnabled<TLoggerType>(this ILogger<TLoggerType> logger)

{

return logger.IsEnabled(LogLevel.Critical);

}

public static bool IsErrorEnabled<TLoggerType>(this ILogger<TLoggerType> logger)

{

return logger.IsEnabled(LogLevel.Error);

}

public static bool IsInformationEnabled<TLoggerType>(this ILogger<TLoggerType> logger)

{

return logger.IsEnabled(LogLevel.Information);

}

public static bool IsTraceEnabled<TLoggerType>(this ILogger<TLoggerType> logger)

{

return logger.IsEnabled(LogLevel.Trace);

}

public static bool IsWarningEnabled<TLoggerType>(this ILogger<TLoggerType> logger)

{

return logger.IsEnabled(LogLevel.Warning);

}

// ...

}

}

And sometimes you want to understand the flow of your application, when an error occurs... but you can't really debug against the

production system. That's where a ILogger<TLoggerType>#TraceMethodEntry extension method comes in handy, to write logs in

Trace mode and get more information.

// Licensed under the MIT license. See LICENSE file in the project root for full license information.

using System.Runtime.CompilerServices;

namespace RebacExperiments.Server.Api.Infrastructure.Logging

{

public static class LoggerExtensions

{

// ...

public static void TraceMethodEntry<TLoggerType>(this ILogger<TLoggerType> logger, [CallerFilePath] string? callerFilePath = null, [CallerLineNumber] int? callerLineNumber = null, [CallerMemberName] string callerMemberName = "")

{

if (logger.IsTraceEnabled())

{

logger.LogTrace("Method Entry (CallerFilePath = {CallerFilePath}, CallerLineNumber = {CallerLineNumber}, CallerMemberName = {CallerMemberName})",

callerFilePath, callerLineNumber, callerMemberName);

}

}

}

}

Don't try to be to clever and just add the method call at the top of every method invocation, that you'd like to see in Trace mode. No AOP with me!

// Licensed under the MIT license. See LICENSE file in the project root for full license information.

// ...

namespace RebacExperiments.Server.Api.Services

{

public class UserService : IUserService

{

private readonly ILogger<UserService> _logger;

private readonly IPasswordHasher _passwordHasher;

public UserService(ILogger<UserService> logger, IPasswordHasher passwordHasher)

{

_logger = logger;

_passwordHasher = passwordHasher;

}

public async Task<List<Claim>> GetClaimsAsync(ApplicationDbContext context, string username, string password, CancellationToken cancellationToken)

{

_logger.TraceMethodEntry();

...

}

}

}

That's it for logging.

Error Handling

Something, that you should decide on early in a project is your error handling. How do you want to communicate errors to the programmer and the user? I think, that using Exceptions should be the preferred way of dealing with Errors in .NET.

For a user to make sense of errors we need ErrorCodes, that can be looked up in a documentation or somewhere.

// Licensed under the MIT license. See LICENSE file in the project root for full license information.

namespace RebacExperiments.Server.Api.Infrastructure.Errors

{

/// <summary>

/// Error Codes used in the Application.

/// </summary>

public static class ErrorCodes

{

/// <summary>

/// General Authentication Error.

/// </summary>

public const string AuthenticationFailed = "Auth:000001";

/// <summary>

/// Entity has not been found.

/// </summary>

public const string EntityNotFound = "Entity:000001";

/// <summary>

/// Access to Entity has been unauthorized.

/// </summary>

public const string EntityUnauthorized = "Entity:000002";

/// <summary>

/// Entity has been modified concurrently.

/// </summary>

public const string EntityConcurrencyFailure = "Entity:000003";

}

}

All exceptions in the application then derive from an abstract ApplicationErrorException, which requires you

to define the Error Code for the type of Exception.

// Licensed under the MIT license. See LICENSE file in the project root for full license information.

namespace RebacExperiments.Server.Api.Infrastructure.Exceptions

{

/// <summary>

/// Base Exception for the Application.

/// </summary>

public abstract class ApplicationErrorException : Exception

{

/// <summary>

/// Gets the Error Code.

/// </summary>

public abstract string ErrorCode { get; }

/// <summary>

/// Gets the Error Message.

/// </summary>

public abstract string ErrorMessage { get; }

protected ApplicationErrorException(string? message, Exception? innerException)

: base(message, innerException)

{

}

}

}

All specific exceptions in the application then derive from the ApplicationErrorException, such as an EntityUnauthorizedAccessException.

The EntityUnauthorizedAccessException is thrown when you try to access an entity you are not authorized to access. To investigate

such issues, the exception contains all required data, such as the Entity to be accessed and the User ID.

// Licensed under the MIT license. See LICENSE file in the project root for full license information.

using RebacExperiments.Server.Api.Infrastructure.Errors;

namespace RebacExperiments.Server.Api.Infrastructure.Exceptions

{

public class EntityUnauthorizedAccessException : ApplicationErrorException

{

/// <summary>

/// Gets or sets an error code.

/// </summary>

public override string ErrorCode => ErrorCodes.EntityNotFound;

/// <summary>

/// Gets or sets an error code.

/// </summary>

public override string ErrorMessage => $"EntityUnauthorizedAccess (User = {UserId}, Entity = {EntityName}, EntityID = {EntityId})";

/// <summary>

/// Gets or sets the User ID.

/// </summary>

public required int UserId { get; set; }

/// <summary>

/// Gets or sets the Entity Name.

/// </summary>

public required string EntityName { get; set; }

/// <summary>

/// Gets or sets the EntityId.

/// </summary>

public required int EntityId { get; set; }

/// <summary>

/// Creates a new <see cref="EntityNotFoundException"/>.

/// </summary>

/// <param name="message">Error Message</param>

/// <param name="innerException">Reference to the Inner Exception</param>

public EntityUnauthorizedAccessException(string? message = null, Exception? innerException = null)

: base(message, innerException)

{

}

}

}

And how do you return the Errors to the caller of the API?

ASP.NET Core comes with ProblemDetails and its surrounding infrastructure. The ProblemDetails stems from the

RFC 7807, which tries to define [...] a "problem detail" as a way to carry machine-readable details of

errors in a HTTP response to avoid the need to define new error response formats for HTTP APIs.

I would suggest to use them.

I think for this application we can get away with an ApplicationErrorHandler, that handles the specific exceptions

and transforms them into an ObjectResult. A younger me would have tried to make it as generic as possible. These

days, I prefer doing the stuff in the most straightforward way.

// Licensed under the MIT license. See LICENSE file in the project root for full license information.

// ...

namespace RebacExperiments.Server.Api.Infrastructure.Errors

{

/// <summary>

/// Options for the <see cref="ApplicationErrorHandler"/>.

/// </summary>

public class ApplicationErrorHandlerOptions

{

/// <summary>

/// Gets or sets the option to include the Exception Details in the response.

/// </summary>

public bool IncludeExceptionDetails { get; set; } = false;

}

/// <summary>

/// Handles errors returned by the application.

/// </summary>

public class ApplicationErrorHandler

{

private readonly ILogger<ApplicationErrorHandler> _logger;

private readonly ApplicationErrorHandlerOptions _options;

public ApplicationErrorHandler(ILogger<ApplicationErrorHandler> logger, IOptions<ApplicationErrorHandlerOptions> options)

{

_logger = logger;

_options = options.Value;

}

public ObjectResult HandleInvalidModelState(HttpContext httpContext, ModelStateDictionary modelStateDictionary)

{

_logger.TraceMethodEntry();

var details = new ValidationProblemDetails(modelStateDictionary)

{

Title = "Validation Failed",

Type = "ValidationError",

Status = (int)HttpStatusCode.BadRequest,

Instance = httpContext.Request.Path,

};

details.Extensions.Add("error-code", ErrorCodes.ValidationFailed);

details.Extensions.Add("trace-id", httpContext.TraceIdentifier);

return new ObjectResult(details)

{

ContentTypes = { "application/problem+json" },

StatusCode = (int)HttpStatusCode.BadRequest,

};

}

public ObjectResult HandleException(HttpContext httpContext, Exception exception)

{

_logger.TraceMethodEntry();

_logger.LogError(exception, "Call to '{RequestPath}' failed due to an Exception", httpContext.Request.Path);

return exception switch

{

AuthenticationFailedException e => HandleAuthenticationException(httpContext, e),

EntityConcurrencyException e => HandleEntityConcurrencyException(httpContext, e),

EntityNotFoundException e => HandleEntityNotFoundException(httpContext, e),

EntityUnauthorizedAccessException e => HandleEntityUnauthorizedException(httpContext, e),

Exception e => HandleSystemException(httpContext, e),

};

}

private ObjectResult HandleAuthenticationException(HttpContext httpContext, AuthenticationFailedException e)

{

_logger.TraceMethodEntry();

var details = new ProblemDetails

{

Title = e.ErrorMessage,

Type = nameof(AuthenticationFailedException),

Status = (int)HttpStatusCode.Unauthorized,

Instance = httpContext.Request.Path,

};

details.Extensions.Add("error-code", ErrorCodes.AuthenticationFailed);

details.Extensions.Add("trace-id", httpContext.TraceIdentifier);

AddExceptionDetails(details, e);

return new ObjectResult(details)

{

ContentTypes = { "application/problem+json" },

StatusCode = (int)HttpStatusCode.Unauthorized,

};

}

// ...

private ObjectResult HandleSystemException(HttpContext httpContext, Exception e)

{

_logger.TraceMethodEntry();

var details = new ProblemDetails

{

Title = "An Internal Server Error occured",

Status = (int)HttpStatusCode.InternalServerError,

Instance = httpContext.Request.Path,

};

details.Extensions.Add("error-code", ErrorCodes.InternalServerError);

details.Extensions.Add("trace-id", httpContext.TraceIdentifier);

AddExceptionDetails(details, e);

return new ObjectResult(details)

{

ContentTypes = { "application/problem+json" },

StatusCode = (int)HttpStatusCode.InternalServerError,

};

}

private void AddExceptionDetails(ProblemDetails details, Exception e)

{

_logger.TraceMethodEntry();

if(_options.IncludeExceptionDetails)

{

details.Extensions.Add("exception", e.ToString());

}

}

}

}

"You could have used a ProblemDetailsFactory, Exception Handler Lambdas!" I hear you say. ASP.NET Core comes with a

ProblemDetailsFactory, Exception Handler Lambdas, ErrorHandlerMiddleware, IExceptionHandler, a ProblemDetailsService

and whatnot... I don't understand, what's the best approach and I don't want to deal with it.

In the Controllers we then pass the ApplicationErrorHandler to the Constructor and can use it to handle an invalid ModelState

and handle any Exception, that's being thrown.

// Licensed under the MIT license. See LICENSE file in the project root for full license information.

// ...

namespace RebacExperiments.Server.Api.Controllers

{

[Route("UserTasks")]

public class UserTasksController : ControllerBase

{

private readonly ILogger<UserTasksController> _logger;

private readonly ApplicationErrorHandler _applicationErrorHandler;

public UserTasksController(ILogger<UserTasksController> logger, ApplicationErrorHandler applicationErrorHandler)

{

_logger = logger;

_applicationErrorHandler = applicationErrorHandler;

}

[HttpPut]

[Route("{id}")]

[Authorize(Policy = Policies.RequireUserRole)]

[EnableRateLimiting(Policies.PerUserRatelimit)]

public async Task<IActionResult> PutUserTask([FromServices] ApplicationDbContext context, [FromServices] IUserTaskService userTaskService, [FromBody] UserTask userTask, CancellationToken cancellationToken)

{

_logger.TraceMethodEntry();

if (!ModelState.IsValid)

{

return _applicationErrorHandler.HandleInvalidModelState(HttpContext, ModelState);

}

try

{

await userTaskService.UpdateUserTaskAsync(context, userTask, User.GetUserId(), cancellationToken);

return Ok(userTask);

}

catch (Exception ex)

{

return _applicationErrorHandler.HandleException(HttpContext, ex);

}

}

// ...

}

}

And that's it for the Error Handling.

Integration Tests with EntityFramework Core and a Database

Generations of Software developers have learnt, that tests are important. And it's right. Tests are your safety net for refactorings, tests make sure you don't accidentally introduce regressions. By all means: Write Tests!

But as a young software developer I fell into the trap, thinking everything needs an interface. Everything needs to

be mocked. We need to reach our code coverage metrics! This led to what I call "a spider web of unit tests", that tested

the codes structure, rather than its functionality. Every refactoring was painful.

These days I think what really matters the most are integration tests. Is the data I am writing actually written to the real database? Did

EntityFramework Core translate the IQueryable correctly to SQL? Did it map the results correctly back and forth between the SQL function and

the application?

I think the easiest way to write Integration Tests with EntityFramework Core is to use something like the TransactionalTestBase from

below. The idea is to start the Transaction in the setup for a test and to dispose it in the teardown, without a commit. This leaves your

database in a consistent state, with all changes being rolled back at the end of each test.

// Licensed under the MIT license. See LICENSE file in the project root for full license information.

// ...

namespace RebacExperiments.Server.Api.Tests

{

/// <summary>

/// Will be used by all integration tests, that need an <see cref="ApplicationDbContext"/>.

/// </summary>

public class TransactionalTestBase

{

/// <summary>

/// We can assume the Configuration has been initialized, when the Tests

/// are run. So we inform the compiler, that this field is intentionally

/// left uninitialized.

/// </summary>

protected IConfiguration _configuration = null!;

/// <summary>

/// We can assume the DbContext has been initialized, when the Tests

/// are run. So we inform the compiler, that this field is intentionally

/// left uninitialized.

/// </summary>

protected ApplicationDbContext _applicationDbContext = null!;

public TransactionalTestBase()

{

_configuration = ReadConfiguration();

}

/// <summary>

/// Read the appsettings.json for the Test.

/// </summary>

/// <returns></returns>

private IConfiguration ReadConfiguration()

{

return new ConfigurationBuilder()

.AddJsonFile("appsettings.json")

.Build();

}

/// <summary>

/// The SetUp called by NUnit to start the transaction.

/// </summary>

/// <returns>An awaitable Task</returns>

[SetUp]

protected async Task Setup()

{

// Create a fresh DbContext for each test, because you don't want the

// Change Tracker to cache entities and pollute the test.

_applicationDbContext = GetApplicationDbContext(_configuration);

await OnSetupBeforeTransaction();

await _applicationDbContext.Database.BeginTransactionAsync(IsolationLevel.ReadCommitted, default);

await OnSetupInTransaction();

}

/// <summary>

/// The TearDown called by NUnit to rollback the transaction.

/// </summary>

/// <returns>An awaitable Task</returns>

[TearDown]

protected async Task Teardown()

{

await OnTearDownInTransaction();

await _applicationDbContext.Database.RollbackTransactionAsync(default);

await OnTearDownAfterTransaction();

await _applicationDbContext.DisposeAsync();

}

/// <summary>

/// Called before the transaction starts.

/// </summary>

/// <returns>An awaitable task</returns>

public virtual Task OnSetupBeforeTransaction()

{

return Task.CompletedTask;

}

/// <summary>

/// Called inside the transaction.

/// </summary>

/// <returns>An awaitable task</returns>

public virtual Task OnSetupInTransaction()

{

return Task.CompletedTask;

}

/// <summary>

/// Called before rolling back the transaction.

/// </summary>

/// <returns>An awaitable task</returns>

public virtual Task OnTearDownInTransaction()

{

return Task.CompletedTask;

}

/// <summary>

/// Called after transaction has been rolled back.

/// </summary>

/// <returns>An awaitable task</returns>

public virtual Task OnTearDownAfterTransaction()

{

return Task.CompletedTask;

}

/// <summary>

/// Builds an <see cref="ApplicationDbContext"/> based on a given Configuration. We

/// expect the Configuration to have a Connection String "ApplicationDatabase" to

/// be defined.

/// </summary>

/// <param name="configuration">A configuration provided by the appsettings.json</param>

/// <returns>An initialized <see cref="ApplicationDbContext"/></returns>

/// <exception cref="InvalidOperationException">Thrown when no Connection String "ApplicationDatabase" was found</exception>

private ApplicationDbContext GetApplicationDbContext(IConfiguration configuration)

{

var connectionString = configuration.GetConnectionString("ApplicationDatabase");

if (connectionString == null)

{

throw new InvalidOperationException($"No Connection String named 'ApplicationDatabase' found in appsettings.json");

}

return GetApplicationDbContext(connectionString);

}

/// <summary>

/// Builds an <see cref="ApplicationDbContext"/> based on a given Connection String

/// and enables sensitive data logging for eventual debugging.

/// </summary>

/// <param name="connectionString">Connection String to the Test database</param>

/// <returns>An initialized <see cref="ApplicationDbContext"/></returns>

private ApplicationDbContext GetApplicationDbContext(string connectionString)

{

var dbContextOptionsBuilder = new DbContextOptionsBuilder<ApplicationDbContext>().UseSqlServer(connectionString);

return new ApplicationDbContext(

logger: new NullLogger<ApplicationDbContext>(),

options: dbContextOptionsBuilder.Options);

}

}

}

Database Access with Entity Framework Core

The Go-To Data Access Framework for .NET is EntityFramework Core. There are strong opinions on EntityFramework Core and

OR-Mappers in general, I will keep mine to myself and use EntityFramework Core in this example. So here is what the

DbContext for the application looks like. I am calling it an ApplicationDbContext.

// Licensed under the MIT license. See LICENSE file in the project root for full license information.

using Microsoft.EntityFrameworkCore;

using RebacExperiments.Server.Api.Models;

namespace RebacExperiments.Server.Api.Infrastructure.Database

{

/// <summary>

/// A <see cref="DbContext"/> to query the database.

/// </summary>

public class ApplicationDbContext : DbContext

{

/// <summary>

/// Logger.

/// </summary>

internal ILogger<ApplicationDbContext> Logger { get; }

/// <summary>

/// Constructor.

/// </summary>

/// <param name="options">Options to configure the base <see cref="DbContext"/></param>

public ApplicationDbContext(ILogger<ApplicationDbContext> logger, DbContextOptions<ApplicationDbContext> options)

: base(options)

{

Logger = logger;

}

/// <summary>

/// Gets or sets the Users.

/// </summary>

public DbSet<User> Users { get; set; } = null!;

/// <summary>

/// Gets or sets the Roles.

/// </summary>

public DbSet<Role> Roles { get; set; } = null!;

/// <summary>

/// Gets or sets the UserTasks.

/// </summary>

public DbSet<UserTask> UserTasks { get; set; } = null!;

/// <summary>

/// Gets or sets the UserTasks.

/// </summary>

public DbSet<Team> Teams { get; set; } = null!;

/// <summary>

/// Gets or sets the UserTasks.

/// </summary>

public DbSet<Organization> Organizations { get; set; } = null!;

/// <summary>

/// Gets or sets the UserTasks.

/// </summary>

public DbSet<RelationTuple> RelationTuples { get; set; } = null!;

/// <summary>

/// List Objects.

/// </summary>

/// <param name="objectNamespace">Object Namespace</param>

/// <param name="objectRelation">Object Relation</param>

/// <param name="subjectNamespace">Subject Namespace</param>

/// <param name="subjectKey">Subject Key</param>

/// <returns></returns>

public IQueryable<RelationTuple> ListObjects(string objectNamespace, string objectRelation, string subjectNamespace, int subjectKey)

=> FromExpression(() => ListObjects(objectNamespace, objectRelation, subjectNamespace, subjectKey));

protected override void OnModelCreating(ModelBuilder modelBuilder)

{

// Add ListObjects Function, so we can use it in LINQ:

modelBuilder

.HasDbFunction(

methodInfo: typeof(ApplicationDbContext).GetMethod(nameof(ListObjects), new[] { typeof(string), typeof(string), typeof(string), typeof(int) })!,

builderAction: builder => builder

.HasSchema("Identity")

.HasName("tvf_RelationTuples_ListObjects"));

// Map the Sequences and Tables here ...

}

}

}

We will register the ApplicationDbContext in the Program.cs using the AddDbContext<TDbContext> extension:

// Database

builder.Services.AddDbContext<ApplicationDbContext>(options =>

{

var connectionString = builder.Configuration.GetConnectionString("ApplicationDatabase");

if (connectionString == null)

{

throw new InvalidOperationException("No ConnectionString named 'ApplicationDatabase' was found");

}

options

.EnableSensitiveDataLogging().UseSqlServer(connectionString);

});

Authentication using ASP.NET Core Cookie Authentication

We start by creating a UserService, which is used to create the Claims for given credentials. All passwords in the database

are hashed using a PasswordHasher. The PasswordHasher is a one to one adaption of the ASP.NET Core Identity package.

As you can see in the UserService, I am passing the ApplicationDbContext as a method parameter. Ugly signatures! But the

reasoning for it is simple: I want all my services to register as a Singleton. I don't want to materialize the EntityFramework

Core DbContext out of thin air, like so many tutorials suggest you to do.

// Licensed under the MIT license. See LICENSE file in the project root for full license information.

// ...

namespace RebacExperiments.Server.Api.Services

{

public class UserService : IUserService

{

private readonly ILogger<UserService> _logger;

private readonly IPasswordHasher _passwordHasher;

public UserService(ILogger<UserService> logger, IPasswordHasher passwordHasher)

{

_logger = logger;

_passwordHasher = passwordHasher;

}

public async Task<List<Claim>> GetClaimsAsync(ApplicationDbContext context, string username, string password, CancellationToken cancellationToken)

{

_logger.TraceMethodEntry();

var user = await context.Users

.AsNoTracking()

.FirstOrDefaultAsync(x => x.LogonName == username, cancellationToken);

if(user == null)

{

throw new AuthenticationFailedException();

}

if (!user.IsPermittedToLogon)

{

throw new AuthenticationFailedException();

}

// Verify hashed password in database against the provided password

var isVerifiedPassword = _passwordHasher.VerifyHashedPassword(user.HashedPassword, password);

if (!isVerifiedPassword)

{

throw new AuthenticationFailedException();

}

// Load the Roles from the List of Objects

var roles = await context

.ListUserObjects<Role>(user.Id, Relations.Member)

.AsNoTracking()

.ToListAsync(cancellationToken);

// Build the Claims for the ClaimsPrincipal

var claims = CreateClaims(user, roles);

return claims;

}

private List<Claim> CreateClaims(User user, List<Role> roles)

{

_logger.TraceMethodEntry();

var claims = new List<Claim>();

if (user.LogonName != null)

{

claims.Add(new Claim(ClaimTypes.NameIdentifier, user.LogonName));

claims.Add(new Claim(ClaimTypes.Email, user.LogonName));

}

// Default Claims:

claims.Add(new Claim(ClaimTypes.Sid, Convert.ToString(user.Id)));

claims.Add(new Claim(ClaimTypes.Name, Convert.ToString(user.PreferredName)));

// Roles:

foreach (var role in roles)

{

claims.Add(new Claim(ClaimTypes.Role, role.Name));

}

return claims;

}

}

}

There are many options to authenticate a user, a popular one is using JSON Web Tokens and have it stateless. For our Backend a Cookie Authentication is simple and totally sufficient, we own both the Server and Clients. But you might want to consider using Windows Authentication or moving that pesky authentication to an external provider, if you don't want to deal with it yourself.

We need to configure the Cookie Authentication in the Program.cs:

// Cookie Authentication

builder.Services

.AddAuthentication(CookieAuthenticationDefaults.AuthenticationScheme)

.AddCookie(options =>

{

options.Cookie.HttpOnly = true;

options.Cookie.SameSite = SameSiteMode.Lax; // We don't want to deal with CSRF Tokens

options.Events.OnRedirectToAccessDenied = (context) =>

{

context.Response.StatusCode = StatusCodes.Status403Forbidden;

return Task.CompletedTask;

};

options.Events.OnRedirectToLogin = (context) =>

{

context.Response.StatusCode = StatusCodes.Status401Unauthorized;

return Task.CompletedTask;

};

});

And we can then write a Controller AuthenticationController, that exposes a sign-in and sign-out method

to authenticate a user against our database.

// Licensed under the MIT license. See LICENSE file in the project root for full license information.

using Microsoft.AspNetCore.Authentication;

using Microsoft.AspNetCore.Authentication.Cookies;

using Microsoft.AspNetCore.Mvc;

using RebacExperiments.Server.Api.Dto;

using RebacExperiments.Server.Api.Infrastructure.Database;

using RebacExperiments.Server.Api.Infrastructure.Errors;

using RebacExperiments.Server.Api.Infrastructure.Logging;

using RebacExperiments.Server.Api.Services;

using System.Security.Claims;

namespace RebacExperiments.Server.Api.Controllers

{

[Route("Authentication")]

public class AuthenticationController : ControllerBase

{

private readonly ILogger<AuthenticationController> _logger;

private readonly ApplicationErrorHandler _applicationErrorHandler;

public AuthenticationController(ILogger<AuthenticationController> logger, ApplicationErrorHandler applicationErrorHandler)

{

_logger = logger;

_applicationErrorHandler = applicationErrorHandler;

}

[HttpPost]

[Route("sign-in")]

public async Task<IActionResult> SignInUser([FromServices] ApplicationDbContext context, [FromServices] IUserService userService, [FromBody] CredentialsDto credentials, CancellationToken cancellationToken)

{

_logger.TraceMethodEntry();

if (!ModelState.IsValid)

{

return _applicationErrorHandler.HandleInvalidModelState(HttpContext, ModelState);

}

try

{

// Create ClaimsPrincipal from Database

var userClaims = await userService.GetClaimsAsync(

context: context,

username: credentials.Username,

password: credentials.Password,

cancellationToken: cancellationToken);

// Create the ClaimsPrincipal

var claimsIdentity = new ClaimsIdentity(userClaims, CookieAuthenticationDefaults.AuthenticationScheme);

var claimsPrincipal = new ClaimsPrincipal(claimsIdentity);

// It's a valid ClaimsPrincipal, sign in

await HttpContext.SignInAsync(claimsPrincipal, new AuthenticationProperties { IsPersistent = credentials.RememberMe });

return Ok();

}

catch (Exception ex)

{

_logger.LogError(ex, "{ControllerAction} failed due to an Exception", nameof(SignInUser));

return _applicationErrorHandler.HandleException(HttpContext, ex);

}

}

[HttpPost]

[Route("sign-out")]

public async Task<IActionResult> SignOutUser()

{

_logger.TraceMethodEntry();

try

{

await HttpContext.SignOutAsync(CookieAuthenticationDefaults.AuthenticationScheme);

}

catch(Exception ex)

{

return _applicationErrorHandler.HandleException(HttpContext, ex);

}

return Ok();

}

}

}

Implementing the Relationship-based Access Control Code

CheckObject API

The function [Identity].[udf_RelationTuples_Check] checks, if a Subject (our User) is authorized to access an Object (our UserTask).

The easiest way to execute the [Identity].[udf_RelationTuples_Check] function was using the new DbContext#SqlQuery(...) method and

to just do a [Identity].[udf_RelationTuples_Check](...) SQL Query. We add the call in a static class ApplicationDbContextExtensions as

extensions methods.

// Licensed under the MIT license. See LICENSE file in the project root for full license information.

// ...

namespace RebacExperiments.Server.Api.Infrastructure.Database

{

/// <summary>

/// Extensions on the <see cref="ApplicationDbContext"/> to allow Relationship-based ACL.

/// </summary>

public static class ApplicationDbContextExtensions

{

/// <summary>